Problem Defining and the Consulting Process

by Ralph H. Kilmann and Ian I. Mitroff

This article was originally published in California Management Review, Vol. 21, No. 3 (Spring 1979), pages 26–33; reprinted in Gore, G. J., and R. G. Wright (Eds.), The Academic/Consultant Connection (Dubuque, Iowa: Kendall/Hunt, 1979), 71-80.

INTRODUCTION

Intervention theory [1] and the consulting process [2] have developed to provide more effective methods by which organizational change is conducted. These methods have emerged in order to operationalize a theory of changing rather than a theory of change. The latter is what Bennis [3] found to be the focus of most discussions on organizational growth and change; yet a theory of changing is needed to create planned change in organizations and not just to explain natural change after the fact [4].

Furthermore, while one can now document many different approaches to changing organizations [5], and hence, to further intervention theory and the consulting process, little attention has been paid to whether these different methods are solving the organization’s real problems. In other words, it appears that each consultant or interventionist applies his own type of method to the problems of the organization [6], without careful consideration as to whether that type of method is indeed suited for the organization’s particular problems [7]. Of course, most consultants do assert that it would be unethical to use change methods, including their own, if these were not appropriate for the organization (that is, if these would not solve the organization’s problems). But one must consider whether the consultant can really be objective in making such a decision, especially if, like most consultants, he is trained only in one or at most two disciplines and therefore cannot assess whether other approaches to change would really be better suited to the problem situation.

It is natural, for example, to expect that a behavioral science consultant trained in interpersonal relationships and group dynamics will perceive that the inefficiencies in the organization are caused by less than adequate interpersonal relationships and group processes; that a consultant specializing in organization design may view the same situation as being caused by ineffective organization structures; and that an industrial engineer would see the identical situation as stemming from man-machine interface problems or the inefficient flow of work materials. The same “selective perception” and biases could also be expected from consultants specializing in operations management, marketing, management information systems, and so on.

The major point of this article is that given the proliferation of methods of changing, the next step in better understanding and explicating intervention theory and the consulting process should include efforts to minimize the Type III error defined as: the probability of solving the wrong problem [8]. As the preceding discussion has suggested, this means that the consultant who is brought into the organization needs some method or procedure for determining more explicitly and objectively whether his or her approach to changing the organization is best suited to the type of problems actually experienced by the organization. Consequently, assessing and managing the Type III error is clearly a major step in the consulting process, and the remainder of this article presents a problem defining/solving perspective and general methodologies so that this critical error will not undermine the potential usefulness of available change programs.

CONSULTING AS PROBLEM DEFINING

The first way of helping to assure that the Type III error will definitely be considered in consulting efforts is to conceptualize the consulting process more explicitly in problem formulation terms. While all consultants undoubtedly realize that they are trying to solve or manage some organizational problem, they often: (1) are quite implicit as to what or who defines the problem to be addressed; (2) assume that the top manager or individual who has hired them “knows” what the problem is and that is why the particular consultant was contacted; and (3) quite naturally perceive, as discussed above, that the problem facing the organization is based on their area of specialization.

Even the literature on problem solving (such as cognitive psychology) has traditionally emphasized the solving part of the process, and has assumed that the individual either “knows” what the problem is or is presented with the problem by some other person [9]. Very little attention has been given to problem finding, problem formulating, or problem creating [10]. However, conceptualizing the consultant first as a problem definer, and then as a specialist in some substantive or disciplinary area (problem solver), places the consultant in the role of primarily helping the organization to be sure that whatever problems it senses are defined correctly. Then various special change approaches can be applied to solve or manage the defined problem, rather than solving the implicit or assumed, and perhaps the incorrect, problem.

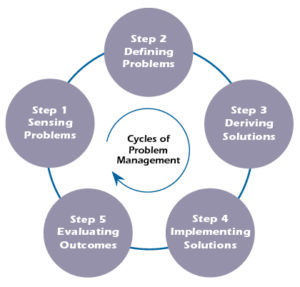

Figure 1 shows this conceptualization of consulting as problem defining as well as solving by picturing five genotypic steps: sensing the problem, defining the problem, deriving solutions to the problem, implementing particular solutions, and evaluating the outcomes of the implemented solutions. The cycle then repeats by noting whether a problem is still sensed. If so, the process continues. Certainly one could list other decision or action steps in the consulting/intervention process (such as choosing various strategies for implementing solutions or choosing methodologies for evaluating outcomes), but these could be incorporated into the five steps.

FIGURE 1

The Consulting/Intervention Process: The Five Steps of Problem Management

One would logically expect that the consulting process starts with Step 1, where one or more individuals in the organization sense that something is wrong or that some problem exists. A problem is here defined as a discrepancy between what is (such as current performance) and what could or should be (such as organizational goals). Once the gap between the “what is” and “what should be” goes beyond some threshold, then one experiences the existence of a problem. For example, the turnover rate in an organization may be 15 percent per year, which could be expected given the nature of the labor force and the general mobility in the industry. However, if the turnover rate became as high as 40 percent, individuals may very well perceive some problems, for this rate significantly exceeds their expectations or threshold levels. This type of sensing or experiencing is analogous to what has been called the “felt need for change” [11].
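The threshold idea in the preceding paragraph can be sketched in a few lines of Python. This is only an illustration: the function name `problem_is_sensed` and the tolerance of 10 percentage points are assumptions made for the example. Only the 15 and 40 percent turnover figures come from the text.

```python
def problem_is_sensed(what_is: float, what_should_be: float, threshold: float) -> bool:
    """Return True when the gap between actual and expected exceeds the threshold."""
    gap = abs(what_is - what_should_be)
    return gap > threshold


# Expected turnover from the text, in percent per year.
EXPECTED_TURNOVER = 15.0
# Hypothetical tolerance in percentage points; the article gives no value.
TOLERANCE = 10.0

# Turnover at the expected level: no gap, so no problem is sensed.
print(problem_is_sensed(15.0, EXPECTED_TURNOVER, TOLERANCE))
# Turnover of 40 percent: a gap of 25 points, well beyond the assumed tolerance.
print(problem_is_sensed(40.0, EXPECTED_TURNOVER, TOLERANCE))
```

In practice the threshold is a subjective expectation held by members of the organization rather than a fixed number, so a sketch like this captures only the logic of sensing, not how the threshold is set.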

Once it is sensed that some problem exists, Step 2 in this process is to define just what the problem is. For example, is the problem one of interpersonal conflict, organizational climate, job design or organization design, member skill inadequacy, or something else? In fact, all these potential problem definitions could be germane to the cause of a high turnover rate mentioned above. Problem definition, however, is usually even more complicated, since a number of problems may be sensed that have broken the threshold on sales, profits, costs, absenteeism as well as turnover, grievances, market share, returned products, or on any other “indicators” by which individuals sense whether a problem exists or not.

But how do members of the organization—with or without the aid of a consultant—define what the basic problem is that is causing the various sensings? Surprisingly, very little is known or understood about the process by which problems are defined, let alone having rather precise methodologies to define problems in the most efficient and effective manner. More often than not, it seems that individuals (including consultants) assume that their view of the world (their specialty) defines the essence of the problem.

Alternatively, some top manager or person “close to the problem” defines what it is, and all attention, including the time and energy of the consultant, is devoted to solving the problem as defined. And when someone asks, “Why are you implementing that particular change program?” the response is, “Because such and such is the problem.” “And why is that the problem?” The response follows, “Because my boss or the consultant says so.” Given the earlier discussion regarding the biases of academic and functional specializations, one might wonder if such implicit problem definitions do not typically result in significant Type III errors—solving the wrong problem instead of the right problem. There is even evidence that a particular definition of a problem is applied because it has always been done that way, even if the problem never is resolved [12]!

Deriving solutions, implementing solutions, and evaluating outcomes (Steps 3, 4, and 5 of the consulting process) follow directly from the definition of the problem. It should be evident, however, that the careful planning and execution of the later steps (problem solving) are irrelevant if the wrong problem definition was developed. This again illustrates the importance of the problem-defining process, for little good results from devoting time, energy, and resources to implementing change programs that are the wrong change programs for the organization’s problem. This would become evident once the organization evaluated the outcomes of the change effort (Step 5) only to find that the problem is still apparent. While new problems may have emerged during the course of implementation, it may also be an indication that an inappropriate change program was applied.

It is interesting to note that Evans [13] has estimated that less than 10 percent of organizational development programs include an evaluation component. Obviously, it is difficult even to estimate whether any problems were actually resolved if the evaluation of outcomes is not conducted. It would also be difficult to distinguish whether new problems arose or whether the initial problems were incorrectly defined if no evaluation were conducted and the organization still sensed the existence of problems following the implemented change program.

Of particular importance concerning the implications of Figure 1 is that most consultants probably enter the consulting process in Step 3 and not during Step 1 or 2. In other words, consultants are often contacted to solve already defined problems. Basically, they are asked to utilize their expertise to derive solutions for such predefined problems. Perhaps this is why a consultant of a particular specialty was contacted by the organization since it had already been determined what the problem is. Another reason for contacting a consultant, however, is that the organization has not only “defined” the problem but has also determined what the solution ought to be. In the latter case, the consultant is brought into the organization in Step 4 to help plan and implement a change program, only because of his or her expertise in implementing just that type of program.

The later the consultant enters the process shown in Figure 1, the more likely it is that the organization and the consultant will commit a significant Type III error. It thus seems reasonable to expect that bringing in the consultant in Step 4 (implementation) is more likely to result in a Type III error than having the consultant enter in Step 3 (deriving solutions), if only because the earlier the entry, the less commitment and inertia have developed around a particular problem definition. But the best approach, according to the philosophy and intentions of the processes shown in Figure 1, would be for the consultant to enter in Step 1, while the organization is sensing that something is wrong, or at least during Step 2, while the problem definition is still being worked out or assumed. Once the process of solving and implementing solutions is under way, however, it is difficult for the consultant to re-question the organization’s problem definition or to have the organization change direction in addressing its problems.

AN ANECDOTE: THE ELEVATOR PROBLEM

Perhaps an anecdote would help to illustrate the important distinctions of problem defining versus problem solving [14]. This story takes place in a large hotel where the hotel manager began receiving more and more complaints from hotel guests concerning the long waits for elevators. Having sensed there was a problem (from the many complaints), he contacted an engineering consultant for a solution to the problem. Naturally, the consultant defined the problem implicitly as a technical one; in fact, it seemed as though the problem definition was not even an issue but was simply assumed. The engineer suggested two alternatives for a solution: speed up the existing elevators or install new elevators. Both of these involved a very high cost.

As the hotel manager was weighing these alternative solutions, he shared the problem with another person—a consultant with a background in psychology. This person suggested that the problem might be a human one, that the perception of time is subjective and perhaps the guests complain about the wait for elevators because they do not have anything else to do while time passes. Defining the problem in these terms suggested two solution alternatives: place mirrors in the hallways by each elevator entrance on each floor or place display cases there that contain interesting and informative materials or news items. These latter alternative solutions were much cheaper than the first two.

This anecdote illustrates how a different problem definition (human versus technical) not only addresses the problem situation quite differently, but does so with entirely different solution alternatives. Choosing the psychological-type definition or engineering-type definition is an example of a Type III error consideration (such as choosing a whole decision tree), whereas once a given definition is chosen, the choice of alternative solutions is an example of Type I and II errors (such as choosing a branch of the given decision tree). While the rightness or wrongness of the problem definition in this anecdote may come down to money considerations primarily, in other problem solutions the issues might also include morale, public image, ease of implementation, and other intangible yet real consequences of alternative problem definitions.

As shown in Figure 1, however, implementation (Step 4) can also fail to achieve desired results. Basically, the members in the organization, for many reasons, may not accept the implemented solution, may resist it, modify it, or even sabotage it. Failing to plan for implementation and to anticipate obstacles, resistances, and forces operating to keep things the same results in the Type IV error: the probability of not implementing a solution properly. However, most approaches to planned change actually concentrate on the implementation of solutions, explicitly recognizing and dealing with the various resistances and problems with change.

Returning to the example of the “elevator problem,” a Type IV error would be made if management had selected the solution of installing mirrors at each floor, but the mirrors that were installed were too aesthetically unappealing to be used much by waiting persons, or if the mirrors were too small, were not located in convenient places by the elevators, or if the halls were too dark to allow appropriate use of the mirrors. Certainly the assumption behind installing the mirrors and managing the Type III error was that the mirrors would be used while people waited for the elevators. When the logistical and psychological factors that affect the use of the solution are ignored, a Type IV error is committed. Alternatively, even if the solution of installing more elevators were chosen, it would be important that the new elevators be experienced as safe, pleasant, and efficient; otherwise, the people in the hotel may prefer to wait for the old elevators, thereby demonstrating a Type IV error on top of a Type III error.

Incidentally, the hotel manager in this anecdote did choose the psychological definition for his problem and actually installed the mirrors. This seemed to solve the problem, since the great volume of initial complaints no longer was evidenced. This also suggests that the mirrors were installed properly during implementation.

METHODOLOGIES FOR PROBLEM DEFINITION

Extrapolating from the elevator anecdote, it would seem that intervention theory and consulting approaches are much more built around managing the Type IV error (intervention as almost synonymous with implementation), while the Type III error, as discussed throughout this article, is typically ignored or assumed away. Thus, the need is for actual methods by which the organization and consultant can be sure that they have defined the organization’s problems appropriately. It would be nice, for example, to have some well-defined statistic that indicates the correct problem definition of several proposed ones, but this is not the case. Problem definition by its very nature is an ill-defined, complex, and ambiguous problem in its own right, and consequently, only subjective estimates can be made regarding the likelihood of a Type III error.

Given the current state of the art of problem definition methods, the best that can be done is as follows:

(a) Formulate several, if not many, different definitions of the problem situation.

(b) Debate these different definitions in order to examine critically their underlying assumptions, implications, and possible consequences.

(c) Develop an integrated or synthesized problem definition by emphasizing the strengths or advantages of each problem definition while minimizing the weaknesses or disadvantages.

(d) Include those persons in (a), (b), and (c) who are experiencing the problem, who have the expertise to define problems in various substantive domains, whose commitment to the problem definition and resulting change program will be necessary in order for that program to be implemented successfully, and who are expected to be affected by the outcomes of any change program attempting to solve or manage the felt problem.

These guidelines are founded on the philosophy of science, specifically on the study of inquiring systems [15], which examines the alternative ways of inquiring into the nature of human phenomena. Guideline (a) is derived from the Kantian Inquiring System, where the objective is to provide the “decision maker” with at least two different views of the problem situation; (b) is rooted in the Hegelian Inquiring System, emphasizing that a debate between the two most opposing views of a problem is necessary in order to uncover their underlying assumptions; and (c) attempts to foster the Singerian-Churchmanian Inquiring System, which seeks to apply a systems approach in synthesizing opposing viewpoints. Finally, (d) is supported by the literature on participative management [16], and the need to generate valid information, free choice, and commitment in order to bring about effective problem solving and change efforts [17].

In general, the better that (a), (b), (c), and (d) are conducted and generated, the less likely a Type III error will be committed. Certainly, this error can be expected to be greater if the organization and consultant had merely accepted some stereotyped view of the problem or one person’s view (such as the top manager), and had not engaged in any debate of alternative problem definitions at all.

Guidelines (a) through (d) can be operationalized in a number of ways that provide the consultant and the organization with alternative methods for defining the organization’s problems. In particular, having different groups of individuals develop different problem definitions, having these groups debate (across groups) their reasons for defining the problem in such different ways, and then having representatives from the groups meet to synthesize the different viewpoints into a single problem definition has proved to be an effective consulting approach [18]. Utilizing groups as a medium for the problem defining process builds upon the group’s ability to generate support and commitment to its viewpoints [19], and the debate between groups tends to foster intense interactions and intergroup challenges [20]. The synthesis step (c) is probably the most difficult to achieve, but under the right climate and setting, an effective intergroup resolution of differences can take place [21].

The question then becomes, how should the groups be composed to define, debate, and synthesize the problem definition? As noted under (d) above, it is important to include all individuals (or their representatives) who may have expertise in the areas relevant to the sensed problem domain, whose commitment is necessary for any change program to be implemented, and who may be affected by the outcomes of the change program. Naturally, this would involve individuals throughout a particular department or the entire organization (depending on the expected scope of the sensed problem), perhaps representatives from certain environmental concerns or clients, and one or more consultants.

Once the participants for the problem-defining process are identified, there are still a number of ways of composing the groups. Some methods which have been utilized include forming homogeneous groups according to personality types, vested interests, individual problem concerns, area of specialization, level in the hierarchy, age, race, or on any other group composition dimension which is expected to result in different problem definitions—the objective being to formulate several very different views of the problem situation, making sure that such differences (and their underlying assumptions) will surface in order to minimize the Type III error.

Regarding the elevator problem discussed earlier, the following methodology for problem definition could have been used to minimize the Type III error (and possibly the Type I, II, and IV errors as well). First, the hotel manager would gather together fifteen to twenty persons, including some engineers, psychologists, industrial designers, interior decorators, and several hotel guests who were experiencing the problem. These individuals would then be placed in homogeneous groups (all engineers in the same group, and so on). Each group would be asked to develop a definition of the problem. A debate among groups would then be fostered in order for each group to be able to challenge the assumptions and positions of every other group.

Once the debate seemed to have generated a deeper understanding of all the different perspectives, an integrated group would meet (one or two representatives from each group), to resolve their differences and to select a single, or develop a synthesized, problem definition for the hotel manager. This definition would then guide the subsequent efforts at generating alternative solutions, strategies for implementing the chosen solution, and methods for evaluating outcomes—thus proceeding through all steps of the consulting/intervention process shown in Figure 1. Naturally, for simple problems that do not have important consequences for the organization, it would not be efficient to devote all the time and resources to go through this whole process. For important, complex problems, however, the use of these resources is deemed quite necessary to manage the critical Type III error.
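The group-composition procedure described for the elevator problem can be sketched in Python. This is a minimal illustration under stated assumptions: the participant names are placeholders, the function names `form_homogeneous_groups` and `pick_representatives` are hypothetical, and taking the first member of each group as its representative is an arbitrary choice, since the article does not say how representatives are selected. The debate and synthesis steps are human processes and are not modeled here.

```python
from collections import defaultdict

# Hypothetical participants as (name, specialty) pairs; names are placeholders.
participants = [
    ("A", "engineer"), ("B", "engineer"),
    ("C", "psychologist"), ("D", "psychologist"),
    ("E", "interior decorator"),
    ("F", "hotel guest"), ("G", "hotel guest"),
]


def form_homogeneous_groups(people):
    """Group participants so that every group shares a single specialty."""
    groups = defaultdict(list)
    for name, specialty in people:
        groups[specialty].append(name)
    return dict(groups)


def pick_representatives(groups, per_group=1):
    """Take the first `per_group` members of each group for the integrated group."""
    return [name for members in groups.values() for name in members[:per_group]]


groups = form_homogeneous_groups(participants)
integrated_group = pick_representatives(groups)

print(groups)            # one group per specialty
print(integrated_group)  # one representative from each group
```

Grouping by specialty is only one of the composition dimensions the article mentions; the same sketch would apply to any other attribute (level in the hierarchy, vested interest, and so on) by changing the second element of each pair.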

SUMMARY AND CONCLUSIONS

An attempt has been made to highlight a key aspect of the intervention/consulting process that has been largely overlooked in the literature and practice in the area: the issue of defining the organization’s problems as correctly as possible before extensive efforts are applied to designing and implementing various change programs. Conceptualizing the consulting process more explicitly in terms of problem defining in addition to problem solving suggests that the former should be the first priority of a consultant, and that the latter should utilize the expertise of the consultant only when it fits with the appropriately defined problem.

A number of guidelines have been given which seek to minimize or at least manage the Type III error: solving the wrong problem instead of the right problem, or alternatively, solving the least important problems given the limited resources of the organization. Also included was an indication of how different group compositions could aid in developing several very different problem definitions, to be defined, debated, and synthesized across the different groups.

While it was suggested that a number of different individuals should be included in the problem definition process, perhaps it should be evident why a single consultant can be expected to be considerably less effective than a team of consultants. One reason, of course, is that a single consultant brings just his or her own viewpoints and area of expertise to the organization even if he or she is able to design and foster the type of problem definition process that has been described. This can be somewhat circumvented by the consultant bringing in others once the problem definition is developed and additional areas of expertise identified. But this means that these potential areas of expertise were not utilized in the important process of first defining the problem.

Because organizations are becoming more and more complex and because the environments of organizations are posing more ill-defined and ambiguous problems than ever before [22], it is becoming more important for organizations to bring together diverse areas of expertise—because such complex problems are multifaceted, requiring interdisciplinary approaches to resolving or managing them. Consequently, an important conclusion to this article, given the problem defining perspective described here, is the recognition that single efforts at consulting are more likely to be ineffective other than for simple, routine problems. For complex problems, where the Type III error is more likely to be committed, multiple, coordinated efforts among diverse consultants would appear to be the more effective approach. Perhaps the latter is the greatest challenge to enhancing the consulting/intervention process because it most violates disciplinary education and the current focus on problem solving instead of first focusing on problem defining.

REFERENCES

[1] C. Argyris, Intervention Theory and Method (Reading, MA: Addison-Wesley, 1970).

[2] R. R. Blake and J. S. Mouton, Consultation (Reading, MA: Addison-Wesley, 1976).

[3] W. G. Bennis, Changing Organizations (New York: McGraw-Hill, 1966).

[4] W. G. Bennis, K. D. Benne, and R. Chin (eds.), The Planning of Change, 2nd ed. (New York: Holt, Rinehart and Winston, 1969).

[5] E. F. Huse, Organizational Development and Change (St. Paul, MN: West, 1975).

[6] E. H. Schein, W. G. Bennis, and R. Beckhard, Organization Development Series (Reading, MA: Addison-Wesley, 1969).

[7] R. H. Kilmann, Social Systems Design: Normative Theory and the MAPS Design Technology (New York: Elsevier North-Holland, 1977).

[8] Type I and Type II errors refer to the traditional statistical errors of accepting the alternative hypothesis when the null hypothesis is true and accepting the null hypothesis when the alternative hypothesis is true, respectively. The Type III error questions whether the null and alternative hypotheses are in fact the right “problems” that should be tested in the first place. See: I. I. Mitroff and T. R. Featheringham, “On Systematic Problem Solving and the Error of the Third Kind,” Behavioral Science (1974), pp. 383-393.

[9] A. Newell, H. A. Simon, and J. C. Shaw, “Elements of a Theory of Human Problem Solving,” Psychological Review (1958), pp. 151-166.

[10] W. F. Pounds, “The Process of Problem Finding,” Industrial Management Review (1969), pp. 1-19; H. J. Leavitt, “Beyond the Analytic Manager,” California Management Review (Spring and Summer 1975).

[11] R. Lippitt, J. Watson, and B. Westley, The Dynamics of Planned Change (New York: Harcourt, Brace, 1958).

[12] Kilmann, op. cit.

[13] M. G. Evans, “Failure in OD Programs—What Went Wrong?” Business Horizons (1974), pp. 18-22.

[14] This anecdote is modified from R. L. Ackoff, “Systems, Organizations, and Interdisciplinary Research,” General Systems Yearbook (1960), pp. 1-8.

[15] C. W. Churchman, The Design of Inquiring Systems (New York: Basic Books, 1971).

[16] H. J. Leavitt, “Applied Organizational Change in Industry: Structural, Technological and Humanistic Approaches,” in J. G. March (ed.), Handbook of Organizations (Chicago: Rand McNally, 1965), pp. 1144-1170.

[17] Argyris, op. cit.

[18] I. I. Mitroff, V. P. Barabba, and R. H. Kilmann, “The Application of Behavioral and Philosophical Technologies to Strategic Planning: A Case Study of a Large Federal Agency,” Management Science (1977), pp. 44-58.

[19] Huse, op. cit.

[20] R. R. Blake and J. S. Mouton, Group Dynamics—Key to Decision Making (Houston: Gulf, 1961).

[21] J. A. Seiler, “Diagnosing Interdepartmental Conflict,” Harvard Business Review (1963), pp. 121-132.

[22] A. Toffler, Future Shock (New York: Bantam, 1970).